To find out if we can actually trust the software designed to protect us, I built a gauntlet to test the top 32 AI image detectors on the market. I didn’t just give them easy, raw files; I tested them against disguised AI images and against heavily stylized human art from 2012, years before modern generative AI existed, to see where the algorithms break.

The market for AI image detection is heavily fractured. I ran 32 detectors through my gauntlet, and the data shows that relying on a random web tool to verify digital truth is a statistical gamble. Most detectors are not identifying AI; they are identifying surface noise, and some will even hallucinate evidence to justify a wrong answer. Here is the breakdown of the methods, the findings, and the definitive 2026 tier list.

The Methodology

To test the current landscape, I used three distinct stress tests to measure false negatives, false positives, and the validity of visual proof. To ensure the tests were not skewed by file quality, all images were at least medium resolution, and the 100% human-made art was provided in high resolution to eliminate compression artifacts as a variable. I began with a larger pool of tools, but several were disqualified after they froze in an endless processing cycle when faced with the intentional digital noise of the Texture Trap (Test 1 below).


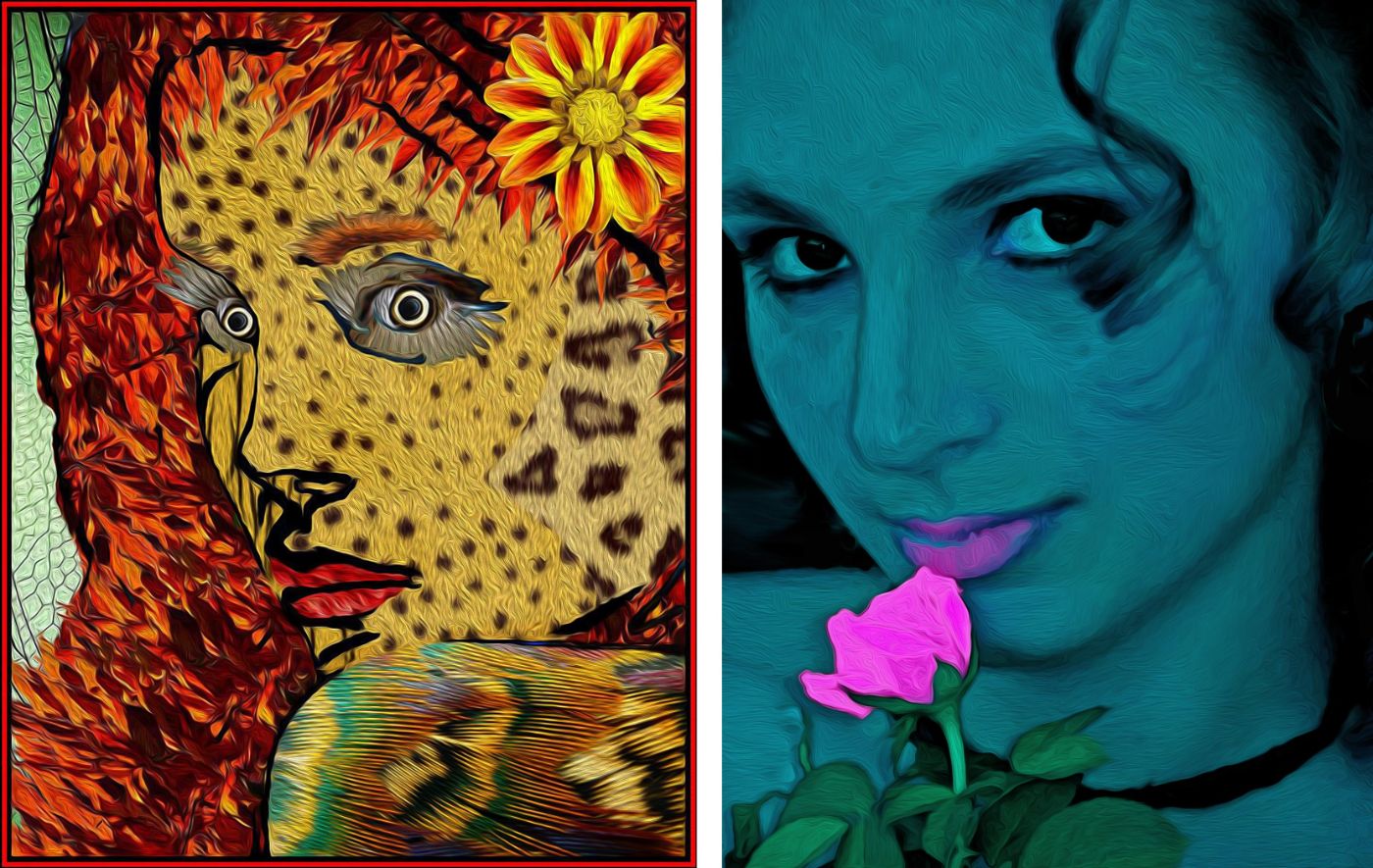

Test 1: The Texture Trap (False Negatives): To avoid infringing on other artists, I started from artwork I own and generated a 100% synthetic recreation of it using DALL-E 3. To simulate common bypass techniques, I applied a “Fine Fabric Texture” overlay, which injects a layer of simulated physical grain. The goal was to see which tools analyzed structural geometry and which were distracted by surface-level noise. Note that this is a cheap trick.


Test 2: The Time Capsule (False Positives): I fed the surviving detectors 100% human-made digital art created in 2012—years before modern generative AI existed. These images utilized manual collage techniques and standard Photoshop tools like the Oil Paint filter. The goal was to see if the algorithms could distinguish between intentional human stylization and machine hallucination.


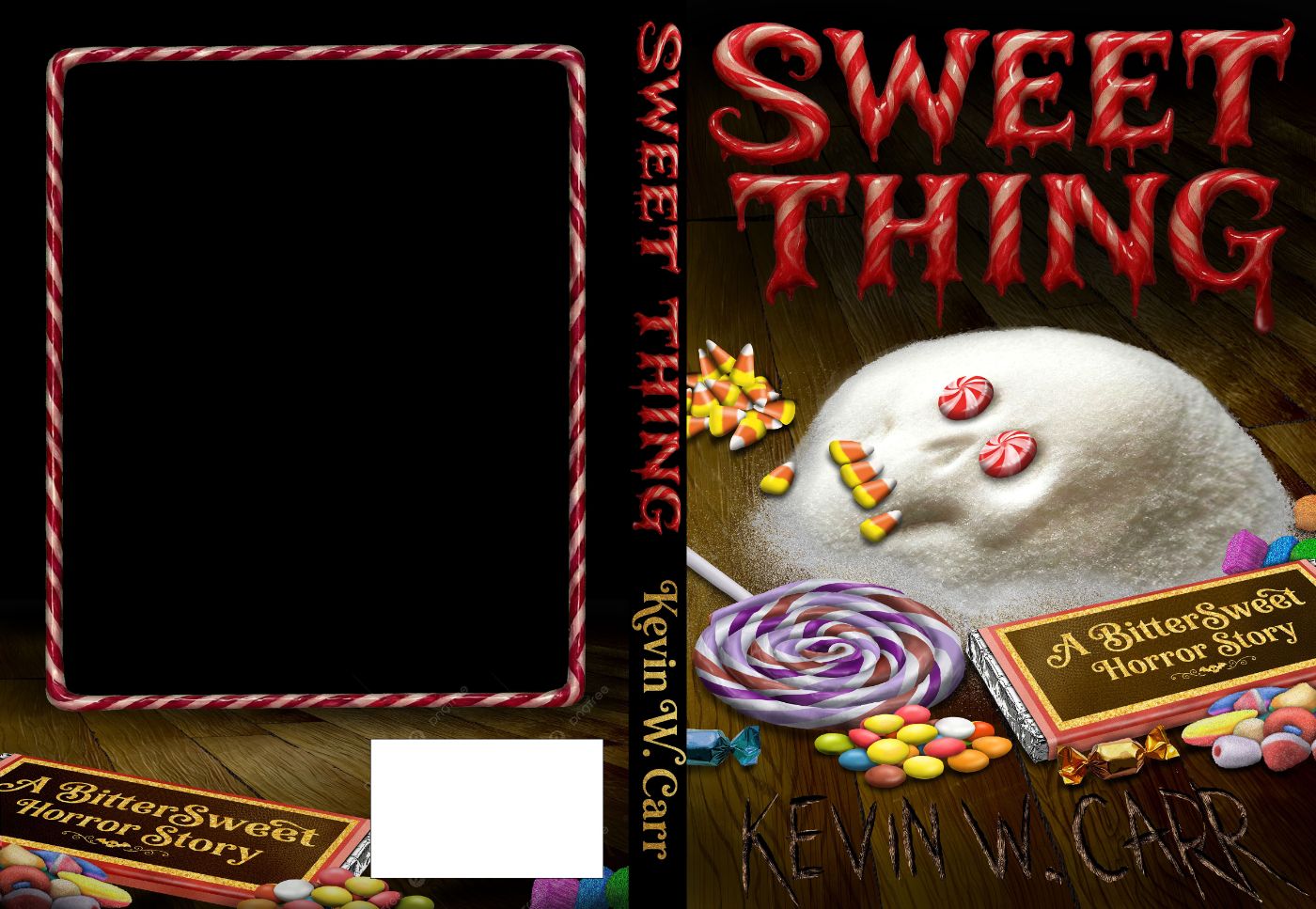

Test 3: The Graphic Design Audit (Visual Proof): I uploaded a 100% human-made book cover featuring flat vector space, text, and a complex central illustration. The goal was to test tools that provide “heatmaps” to see if their localized forensic evidence was actually tied to structural failures.
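For readers who want to log a similar audit, the bookkeeping behind the three tests can be sketched as below. This is only an illustration: the `Trial` record, the detector labels, and the sample rows are hypothetical placeholders, not results from this investigation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    detector: str  # hypothetical detector label
    test: str      # "texture_trap", "time_capsule", or "design_audit"
    truth: str     # ground truth: "ai" or "human"
    verdict: str   # what the detector reported: "ai" or "human"


def classify(trial):
    """Label one trial as correct, a false negative, or a false positive."""
    if trial.truth == trial.verdict:
        return "correct"
    if trial.truth == "ai":
        # An AI image was passed off as human: the detector missed it.
        return "false_negative"
    # A human image was accused of being AI.
    return "false_positive"


def summarize(trials):
    """Count outcomes per detector."""
    summary = {}
    for trial in trials:
        counts = summary.setdefault(
            trial.detector,
            {"correct": 0, "false_negative": 0, "false_positive": 0},
        )
        counts[classify(trial)] += 1
    return summary


# Placeholder rows for illustration only, not findings from the audit.
trials = [
    Trial("detector_a", "texture_trap", "ai", "human"),
    Trial("detector_a", "time_capsule", "human", "human"),
    Trial("detector_b", "texture_trap", "ai", "ai"),
    Trial("detector_b", "time_capsule", "human", "ai"),
    Trial("detector_b", "design_audit", "human", "ai"),
]
summary = summarize(trials)
print(summary)
```

Keeping the ground truth next to each verdict makes it obvious which kind of error a tool made, which matters because a missed AI image and a falsely accused artist are very different failures.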

The Findings in Perspective

The results exposed fundamental flaws in how these systems operate. The market is defined by a “U-shaped” failure curve: the low-end tools miss disguised AI, the high-end tools falsely accuse stylized human art, and there is a dangerous anomaly at the top.

1. The Bottom 50% Are Noise Detectors

Over a third of the detectors tested confidently labeled the 100% AI redwood image from the Texture Trap as “Human.” They fell completely for the fabric texture. These low-end tools operate on a positive-feature bias: they scan for high-resolution textures, standard color palettes, and grain. When they saw the fabric overlay, they stopped looking for the “melted” anatomy beneath it. They are effectively blind to modern diffusion math.
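To make that failure mode concrete, here is a toy sketch of a detector that judges an image purely by surface grain. It is an assumption-laden illustration, not the code of any tool named in this article: the `grain_score` metric, the threshold of 8.0, and the synthetic gradient standing in for a clean render are all made up for the example.

```python
import random


def grain_score(pixels):
    """Mean absolute difference between horizontally adjacent pixels."""
    total = 0
    pairs = 0
    for row in pixels:
        for left, right in zip(row, row[1:]):
            total += abs(right - left)
            pairs += 1
    return total / pairs


def add_grain(pixels, strength, seed=0):
    """Overlay pseudo-random grain, clamped to the 0-255 range."""
    rng = random.Random(seed)
    return [
        [min(255, max(0, value + rng.randint(-strength, strength))) for value in row]
        for row in pixels
    ]


def naive_verdict(pixels, threshold=8.0):
    """Toy rule (hypothetical): enough surface grain means 'human'."""
    return "human" if grain_score(pixels) > threshold else "ai"


# Smooth 32x32 grayscale gradient standing in for a clean synthetic render.
clean = [[(x + y) * 4 for x in range(32)] for y in range(32)]
grainy = add_grain(clean, strength=20)

print(naive_verdict(clean))   # smooth surface, so the toy rule says "ai"
print(naive_verdict(grainy))  # same structure plus grain, now "human"
```

The underlying structure of the image never changes between the two calls. Only the surface noise does, and that alone is enough to flip a rule that looks at texture and nothing else.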

2. The Elites Are Blind to Art

The high-end detectors—the ones running powerful Vision Transformers like DINOv3 or utilizing semantic logic to spot structural errors—easily saw through the fabric texture to catch the AI. However, they failed spectacularly on the 2012 human art. Because these elite tools are trained to hunt for “logical inconsistencies” and “algorithmic curves,” they mistakenly flagged manual collage seams as spatial reasoning failures, and standard Photoshop oil filters as generative noise. They cannot tell the difference between a machine making a mistake and a human artist making a stylistic choice.

3. The Danger of Hallucinated Evidence

The most alarming discovery occurred during the Graphic Design Audit. Tools that offer “X-ray visuals” (like Copyleaks) were initially praised for transparency. However, when fed the human-made book cover, Copyleaks not only falsely flagged it as AI but also generated a nonsensical heatmap. It highlighted flat, empty background spaces and a candy cane border as “synthetic” while ignoring the complex central illustration. This reveals that some “Elite” tools do not just guess the verdict; they will hallucinate visual evidence to justify a false positive.
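One rough way to put a number on a misplaced heatmap is to ask how much of its “heat” lands on the part of the image that actually contains detail. The sketch below assumes you can export the heatmap as a grid of values between 0 and 1 and that you hand-draw a mask for the detailed region; the function name, the grids, and the numbers are hypothetical and are not taken from any tool's output.

```python
def heat_inside_fraction(heatmap, content_mask):
    """Share of total heat that falls inside the masked content region."""
    total = 0.0
    inside = 0.0
    for heat_row, mask_row in zip(heatmap, content_mask):
        for heat, is_content in zip(heat_row, mask_row):
            total += heat
            if is_content:
                inside += heat
    # An all-zero heatmap carries no evidence either way.
    return inside / total if total else 0.0


# Hypothetical 4x4 example: the detailed illustration occupies the center,
# while the heatmap lights up the flat border instead.
size = 4
content_mask = [
    [1 <= r <= 2 and 1 <= c <= 2 for c in range(size)] for r in range(size)
]
heatmap = [
    [0.1 if content_mask[r][c] else 0.9 for c in range(size)] for r in range(size)
]

fraction = heat_inside_fraction(heatmap, content_mask)
print(f"{fraction:.1%} of the heat falls on the illustration")
```

A very low fraction does not prove the verdict is wrong, but it does suggest the highlighted regions are not where the structural evidence would have to be.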

Why is this important? If you have ever spent hours working on a piece of art only to have a program tell you it is fake, you understand. The truth is that even the best AI detectors are no better than lie detectors, whose results fall well short of definitive proof. That is why lie detector results are generally not accepted as evidence in a court of law.

The 2026 Detector Rankings: A Map of Flawed Algorithms

After pushing 32 tools through the three stress tests, here is how the market actually stacks up.

Tier 1: The Lone Survivor (With Caveats)

· The Contender: AIPhotoCheck

· The Reality: It is the only tool that survived both the Texture Trap (catching the redwood) and the Graphic Design Audit (correctly passing the human book cover). Because it uses semantic logic rather than arbitrary heatmaps, its reasoning is sounder. However, it still tripped over the 2012 abstract collages, proving that even the best tool cannot safely evaluate heavily stylized or abstract human art.

Tier 2: The Industrial Black Boxes (The Math Geeks)

· The Contenders: Hive Moderation, WriteHuman, ZeroGPT, DeepAI, and the “98% Club.”

· The Reality: They are ruthless at catching modern AI. If it has DALL-E or Midjourney math, they will flag it with 99% certainty, ignoring any fabric overlays. But because they are purely mathematical, they completely failed the 2012 art test. They saw the repeating patterns of a 2012 Photoshop filter and confidently branded human art as a machine generation. They offer no auditable proof.

Tier 3: The Hallucinators and Guessers

· The Contenders: Copyleaks, DupliChecker, Arting, Scanly, ImageWhisperer.

· The Reality: This tier contains tools that hover between 45% and 75% confidence (Probability Soup), relying on shallow metadata. It also now contains Copyleaks. While Copyleaks caught the raw AI, its failure on human graphic design—and its generation of a completely arbitrary, hallucinated heatmap to justify that failure—makes its visual evidence actively dangerous for professional verification.

Tier 4: The Bottom Feeders (The Surface Scanners)

· The Contenders: MyDetector, Decopy, BrandWell, QuillBot, wedetect.ai.

· The Reality: Functionally blind. They spectacularly failed the redwood test, labeling 100% AI as “Human” simply because the fabric texture looked like a real photograph. Ironically, they passed the 2012 Time Capsule test. They did not pass because they are advanced; they passed because the bar for their detection is so low that they cannot see anything that isn’t an unedited, glaringly obvious AI generation.
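As a reading aid, the tier descriptions above can be compressed into a small decision function. This is my own simplification of the prose, not a formal rubric: the parameter names and the order in which the rules are checked are assumptions, and `passed_design_audit` is left as `None` for tools that were not put through that test.

```python
TIER_NAMES = {
    1: "The Lone Survivor (With Caveats)",
    2: "The Industrial Black Boxes",
    3: "The Hallucinators and Guessers",
    4: "The Bottom Feeders",
}


def assign_tier(caught_textured_ai, passed_design_audit, confidence, arbitrary_heatmap):
    """Map stress-test outcomes to a tier number (simplified, hypothetical rules)."""
    # Fooled by the fabric overlay: surface scanner.
    if not caught_textured_ai:
        return 4
    # Arbitrary heatmap, or confidence stuck in the 45%-75% band.
    if arbitrary_heatmap or 0.45 <= confidence <= 0.75:
        return 3
    # Caught the AI and correctly passed the human book cover.
    if passed_design_audit is True:
        return 1
    # Catches AI with high confidence but offers no auditable proof.
    return 2


tier = assign_tier(
    caught_textured_ai=True,
    passed_design_audit=None,
    confidence=0.99,
    arbitrary_heatmap=False,
)
print(tier, TIER_NAMES[tier])
```

Note that passing the Time Capsule test plays no role in these rules, which mirrors the point above: the Tier 4 tools passed it only because they miss almost everything.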

The Final Takeaway

There is currently no silver bullet for AI detection. The bottom half of the market is easily fooled by basic Photoshop textures, and the top half fails on highly stylized or atypical art, with some tools hallucinating evidence to accuse human graphic designers of faking their work.

AI detectors are currently signature catchers, not truth tellers. Visual forensics is a broken compass unless paired with hard metadata. If you are a digital artist using heavy stylization, collage, or abstract concepts, the “best” AI detectors in the world are currently your biggest threat. They are programmed to hunt for algorithmic perfection and spatial logic, meaning the messier and more abstract your human art is, the more likely a machine will accuse you of faking it.

Coming up, a look at the AI writing detectors.

Next: The AI Illusion (Part 3): Testing the Lies of the Lie Detectors

:::info

Disclaimer: This article outlines the findings of a qualitative forensic audit. It is not a quantitative academic study, and the conclusions are analytical interpretations of specific algorithmic stress tests rather than definitive statistical failure rates. However, the data presented is representative of my direct investigation and interpretation of the evidence. All platform results, false positives, and hallucinated metrics are fully documented and archived.

:::

:::info

While I did use original art from an unreleased book, this is not an advertisement for that book. It is simply the art I had available because I made it.

:::