This post is cowritten by David Stewart and Matthew Persons from Oumi.

Fine-tuning open source large language models (LLMs) often stalls between experimentation and production. Training configurations, artifact management, and scalable deployment each require different tools, creating friction when moving from rapid experimentation to secure, enterprise-grade environments.

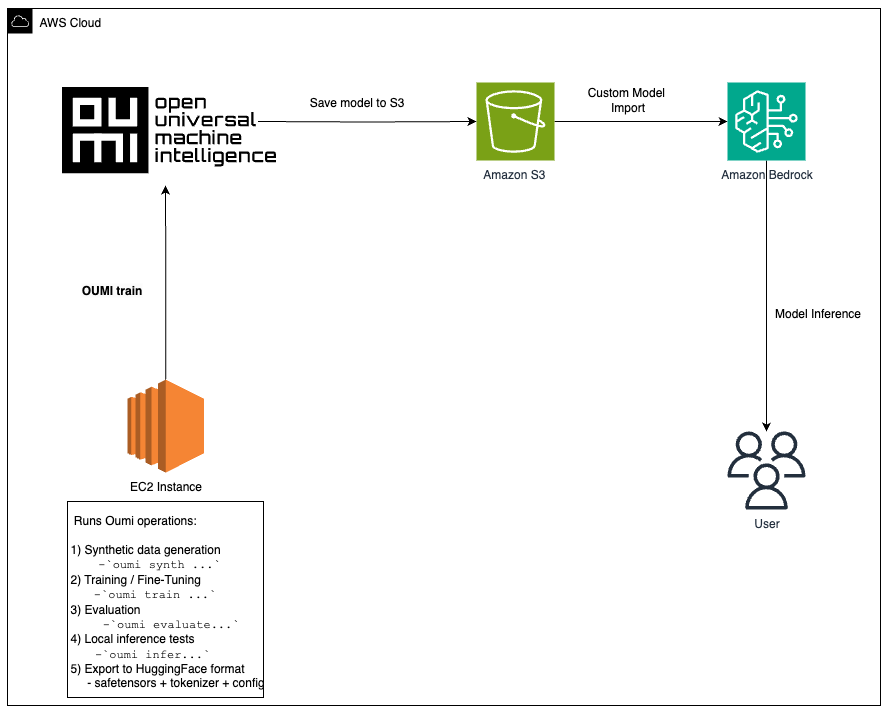

In this post, we show how to fine-tune a Llama model using Oumi on Amazon EC2 (with the option to create synthetic data using Oumi), store artifacts in Amazon S3, and deploy to Amazon Bedrock using Custom Model Import for managed inference. While we use EC2 in this walkthrough, fine-tuning can be completed on other compute services such as Amazon SageMaker or Amazon Elastic Kubernetes Service, depending on your needs.

Benefits of Oumi and Amazon Bedrock

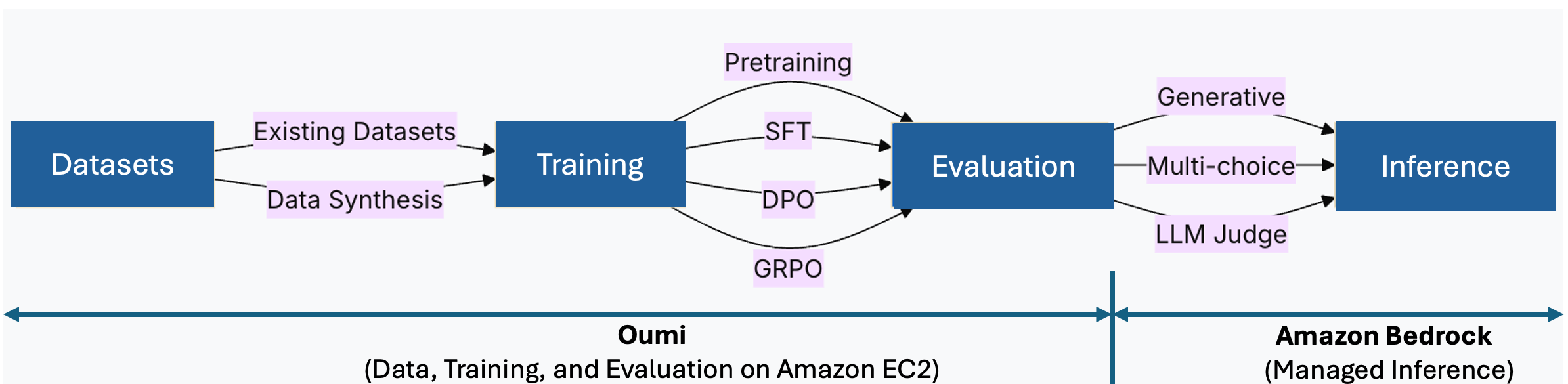

Oumi is an open source system that streamlines the foundation model lifecycle, from data preparation and training to evaluation. Instead of assembling separate tools for each stage, you define a single configuration and reuse it across runs.

Key benefits for this workflow:

- Recipe-driven training: Define your configuration once and reuse it across experiments, reducing boilerplate and improving reproducibility

- Flexible fine-tuning: Choose full fine-tuning or parameter-efficient methods like LoRA, based on your constraints

- Integrated evaluation: Score checkpoints using benchmarks or LLM-as-a-judge without additional tooling

- Data synthesis: Generate task-specific datasets when production data is limited

Amazon Bedrock complements this by providing managed, serverless inference. After fine-tuning with Oumi, you import your model via Custom Model Import in three steps: upload to S3, create the import job, and invoke. No inference infrastructure to manage. The following architecture diagram shows how these components work together.

Figure 1: Oumi manages data, training, and evaluation on EC2. Amazon Bedrock provides managed inference via Custom Model Import.

Solution overview

This workflow consists of three stages:

- Fine-tune with Oumi on EC2: Launch a GPU-optimized instance (for example, g5.12xlarge or p4d.24xlarge), install Oumi, and run training with your configuration. For larger models, Oumi supports distributed training with Fully Sharded Data Parallel (FSDP), DeepSpeed, and Distributed Data Parallel (DDP) strategies across multi-GPU or multi-node setups.

- Store artifacts on S3: Upload model weights, checkpoints, and logs for durable storage.

- Deploy to Amazon Bedrock: Create a Custom Model Import job pointing to your S3 artifacts. Amazon Bedrock provisions inference infrastructure automatically. Client applications call the imported model using the Amazon Bedrock Runtime APIs.

This architecture addresses common challenges in moving fine-tuned models to production:

| Challenge | Oumi and Amazon Bedrock solution |

| Iteration speed | Oumi’s modular recipes enable rapid experimentation across configurations |

| Reproducibility | S3 stores versioned checkpoints and training metadata |

| Scalable inference | Amazon Bedrock scales automatically without manual GPU provisioning |

| Security and compliance | AWS Identity and Access Management (IAM), Amazon Virtual Private Cloud (VPC), and AWS Key Management Services (KMS) integrate natively |

| Cost optimization | Amazon EC2 Spot Instances for training; Amazon Bedrock custom model 5-minute interval pricing for inference |

Technical implementation

Let’s walk through a hands-on workflow using the meta-llama/Llama-3.2-1B-Instruct model as an example. While we selected this model since it pairs well with fine-tuning on an AWS g6.12xlarge EC2 instance, the same workflow can be replicated across many other open source models (note that larger models may require larger instances or distributed training across instances). For more information, see the Oumi model fine-tuning recipes and Amazon Bedrock custom model architectures.

Prerequisites

To complete this walkthrough, you need:

- An AWS account with permissions to use EC2, S3, and Custom Model Import in your target AWS Region (we use

us-west-2). For the list of supported Regions, see the Amazon Bedrock documentation. - An IAM role configured with credentials. The role must allow reading and writing model artifacts in S3 and creating a Custom Model Import job.

- AWS Command Line Interface (AWS CLI) version 2 or later, configured with credentials, to run the model import job and invoke the model.

- A Hugging Face account and access token for gated model weights (for example, meta-llama/Llama-3.2-1B-Instruct).

- The companion source code repository: github.com/aws-samples/sample-oumi-fine-tuning-bedrock-cmi

Set up AWS Resources

- Clone this repository on your local machine:

git clone https://github.com/aws-samples/sample-oumi-fine-tuning-bedrock-cmi.git

cd sample-oumi-fine-tuning-bedrock-cmi- Run the setup script to create IAM roles, an S3 bucket, and launch a GPU-optimized EC2 instance:

./scripts/setup-aws-env.sh [--dry-run]The script prompts for your AWS Region, S3 bucket name, EC2 key pair name, and security group ID, then creates all required resources. Defaults: g6.12xlarge instance, Deep Learning Base AMI with Single CUDA (Amazon Linux 2023), and 100 GB gp3 storage. Note: If you do not have permissions to create IAM roles or launch EC2 instances, share this repository with your IT administrator and ask them to complete this section to set up your AWS environment.

- Once the instance is running, the script outputs the SSH command and the Amazon Bedrock import role ARN (needed in Step 5). SSH into the instance and continue with Step 1 below.

See the iam/README.md for IAM policy details, scoping guidance, and validation steps.

Step 1: Set up the EC2 environment

Complete the following steps to set up the EC2 environment.

- On the EC2 instance (Amazon Linux 2023), update the system and install base dependencies:

sudo yum update -y

sudo yum install python3 python3-pip git -y- Clone the companion repository:

git clone https://github.com/aws-samples/sample-oumi-fine-tuning-bedrock-cmi.git

cd sample-oumi-fine-tuning-bedrock-cmi- Configure environment variables (replace the values with your actual region and bucket name from the setup script):

export AWS_REGION=us-west-2

export S3_BUCKET=your-bucket-name

export S3_PREFIX=your-s3-prefix

aws configure set default.region "$AWS_REGION"- Run the setup script to create a Python virtual environment, install Oumi, validate GPU availability, and configure Hugging Face authentication. See setup-environment.sh for options.

./scripts/setup-environment.sh

source .venv/bin/activate- Authenticate with Hugging Face to access gated model weights. Generate an access token at huggingface.co/settings/tokens, then run:

hf auth loginStep 2: Configure training

The default dataset is tatsu-lab/alpaca, configured in configs/oumi-config.yaml. Oumi downloads it automatically during training, no manual download is needed. To use a different dataset, update the dataset_name parameter in configs/oumi-config.yaml. See the Oumi dataset docs for supported formats.

[Optional] Generate synthetic training data with Oumi:

To generate synthetic data using Amazon Bedrock as the inference backend, update the model_name placeholder in configs/synthesis-config.yaml with an Amazon Bedrock model ID you have access to (e.g. anthropic.claude-sonnet-4-6). See Oumi data synthesis docs for details. Then run:

oumi synth -c configs/synthesis-config.yamlStep 3: Fine-tune the model

Fine-tune the model using Oumi’s built-in training recipe for Llama-3.2-1B-Instruct:

./scripts/fine-tune.sh --config configs/oumi-config.yaml --output-dir models/final [--dry-run]To customize hyperparameters, edit oumi-config.yaml.

Note: If you generated synthetic data in Step 2, update the dataset path in the config before training.

Monitor GPU utilization with nvidia-smi or Amazon CloudWatch Agent. For long-running jobs, configure Amazon EC2 Automatic Instance Recovery to handle instance interruptions.

Step 4: Evaluate model (Optional)

You can evaluate the fine-tuned model using standard benchmarks:

oumi evaluate -c configs/evaluation-config.yamlThe evaluation config specifies the model path and benchmark tasks (e.g., MMLU). To customize, edit evaluation-config.yaml. For LLM-as-a-judge approaches and additional benchmarks, see Oumi’s evaluation guide.

Step 5: Deploy to Amazon Bedrock

Complete the following steps to deploy the model to Amazon Bedrock:

- Upload model artifacts to S3 and import the model to Amazon Bedrock.

./scripts/upload-to-s3.sh --bucket $S3_BUCKET --source models/final --prefix $S3_PREFIX

./scripts/import-to-bedrock.sh --model-name my-fine-tuned-llama --s3-uri s3://$S3_BUCKET/$S3_PREFIX --role-arn $BEDROCK_ROLE_ARN --wait- The import script outputs the model ARN on completion. Set

MODEL_ARNto this value (format:arn:aws:bedrock:<REGION>:<ACCOUNT_ID>:imported-model/<MODEL_ID>). - Invoke the model on Amazon Bedrock

./scripts/invoke-model.sh --model-id $MODEL_ARN --prompt "Translate this text to French: What is the capital of France?"- Amazon Bedrock creates a managed inference environment automatically. For IAM role set up, see bedrock-import-role.json.

- Enable S3 versioning on the bucket to support rollback of model revisions. For SSE-KMS encryption and bucket policy hardening, see the security scripts in the companion repository.

Step 6: Clean up

To avoid ongoing costs, remove the resources created during this walkthrough:

aws ec2 terminate-instances --instance-ids $INSTANCE_ID

aws s3 rm s3://$S3_BUCKET/$S3_PREFIX/ --recursive

aws bedrock delete-imported-model --model-identifier $MODEL_ARNConclusion

In this post, you learned how to fine-tune a Llama-3.2-1B-Instruct base model using Oumi on EC2 and deploy it using Amazon Bedrock Custom Model Import. This approach gives you full control over fine-tuning with your own data while using managed inference in Amazon Bedrock.

The companion sample-oumi-fine-tuning-bedrock-cmi repository provides scripts, configurations, and IAM policies to get started. Clone it, swap in your dataset, and deploy a custom model to Amazon Bedrock.

To get started, explore the resources below and begin building your own fine-tuning-to-deployment pipeline on Oumi and AWS. Happy Building!

Learn More

Acknowledgement

Special thanks to Pronoy Chopra and Jon Turdiev for their contribution.